projects

Portfolio of former and current projects.

Real-time Voice Conversion and Speaker Design

As part of my doctoral research, I developed a real-time voice conversion system implemented within the Unity game engine via the JUCE/C++ framework. The system enables novel voice synthesis through the manipulation of perceptual speech characteristics, including 'gender' and 'age' attributes, as well as fine-grained prosodic control over pitch parameters. An interactive demonstration is available.

Danish Speech Recognition — Fine-tuning Whisper

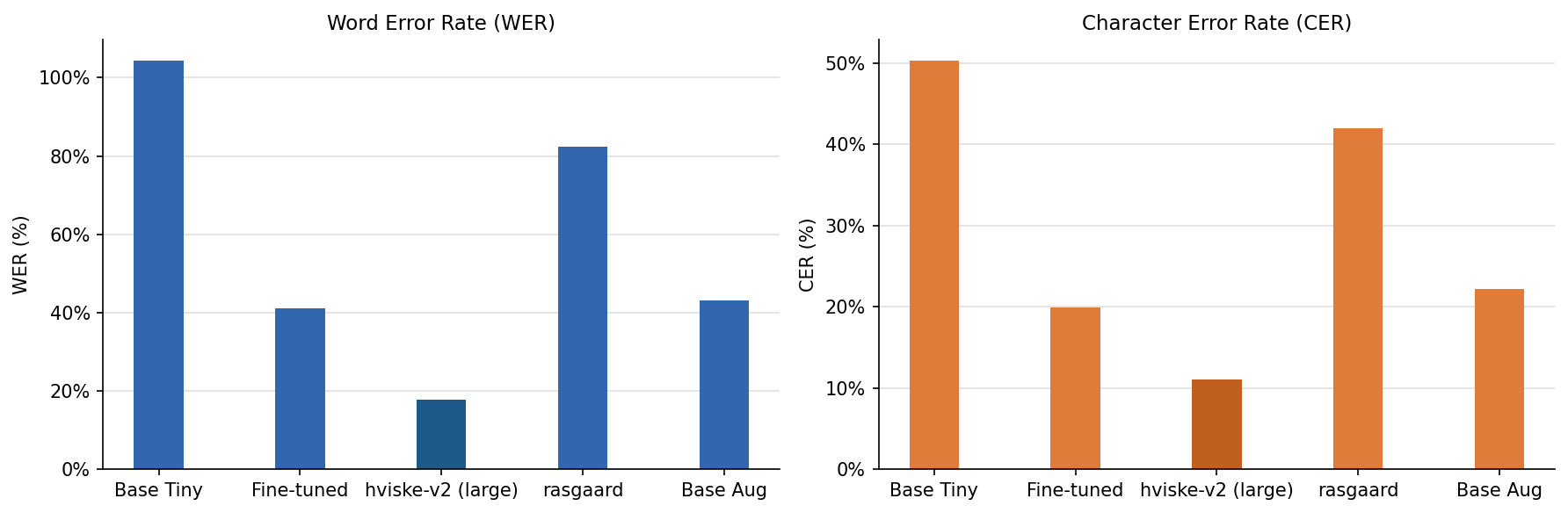

Fine-tuned OpenAI Whisper-tiny (~40M parameters) on the CoRal-v2 Danish speech corpus (~250k samples spanning a wide range of ages and dialect groups). Three training variants were explored: a frozen-encoder baseline, a data-augmented variant, and a LoRA adapter. The frozen-encoder baseline achieves ~41% word error rate (WER), down from a starting WER of 104% and well below the only other publicly available tiny Danish model (~83% WER). The fine-tuned model is hosted on HuggingFace and served via a Dockerized FastAPI inference API. Source code is available on GitHub.
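For readers unfamiliar with the metric, WER is the word-level edit distance (substitutions, deletions, and insertions) divided by the number of words in the reference transcript. Because insertions count as errors, the score can exceed 100%, which is how a figure such as 104% can arise. The snippet below is a minimal illustrative sketch of that definition, not the evaluation code used in the project; the function name `word_error_rate` and the example sentences are assumptions made for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


# Illustrative (made-up) transcripts, not samples from the corpus.
print(word_error_rate("hej med dig", "hej med dig"))  # perfect match
print(word_error_rate("hej", "hej hej hej"))          # two insertions on one reference word
```

A hypothesis that repeats or hallucinates words is penalised for every extra word, so a model that has not been adapted to the target language can score above 100%.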

Unified Timbre Transfer

A unified timbre transfer plugin running in the Neutone VST, usable in any DAW. The model can perform timbre transfer on any monophonic, periodic input and morph seamlessly between different instruments, including violin, bassoon, and trumpet. In this example we drive the plugin with a simple sine oscillator. For more information see the paper in the publications section or this blog post.

Eye-Driven Electric Guitar

As part of a semester project focused on physical modeling for audio synthesis, I developed an eye-driven electric guitar system. The instrument features string dynamics modeled through finite difference schemes, coupled with a Marshall amplifier simulation created via white-box modeling techniques. The system integrates eye-tracking technology to enable performance control, allowing users to play the virtual guitar through ocular movements alone.
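To give a flavour of what a finite difference string model looks like, the sketch below steps the ideal 1D wave equation with the standard explicit scheme and fixed ends. It is a simplified illustration rather than the model used in the project: it assumes a lossless string without stiffness or damping, and the function name `simulate_string` and all parameter values (wave speed, pluck and pickup positions) are hypothetical.

```python
def simulate_string(num_steps, sample_rate=44100.0, wave_speed=200.0,
                    length=1.0, pluck_pos=0.2, pickup_pos=0.3):
    """Explicit finite difference scheme for an ideal string with fixed ends.

    pluck_pos and pickup_pos are fractions of the string length.
    Returns the displacement at the pickup position, one value per time step.
    """
    k = 1.0 / sample_rate                    # time step
    n = int(length / (wave_speed * k))       # number of grid intervals
    h = length / n                           # grid spacing, chosen so that lambda <= 1
    lam_sq = (wave_speed * k / h) ** 2       # squared Courant number

    def pluck(x):
        # Triangular initial displacement with its peak at pluck_pos.
        return x / pluck_pos if x <= pluck_pos else (1.0 - x) / (1.0 - pluck_pos)

    u = [pluck(l / n) for l in range(n + 1)]
    u[0] = u[n] = 0.0                        # fixed (Dirichlet) boundaries
    u_prev = list(u)                         # string starts at rest
    pickup = round(pickup_pos * n)

    out = []
    for _ in range(num_steps):
        out.append(u[pickup])
        u_next = [0.0] * (n + 1)             # end points stay at zero
        for l in range(1, n):
            u_next[l] = (2.0 * u[l] - u_prev[l]
                         + lam_sq * (u[l + 1] - 2.0 * u[l] + u[l - 1]))
        u_prev, u = u, u_next
    return out


samples = simulate_string(2000)
print(len(samples), max(abs(s) for s in samples))
```

The grid spacing is derived from the time step so that the Courant number stays at or below one, which is the stability condition for this scheme. A more realistic guitar string would add stiffness and frequency-dependent loss terms to the update.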